Even though the cubesat LilacSat-1 was launched more than a year ago, I haven’t played with it much, since I’ve been busy with many other things. I tested it briefly after it was launched, using its Codec2 downlink, but I hadn’t done anything else since then.

LilacSat-1 has an FM/Codec2 transponder (uplink is analog FM in the 2m band and downlink is Codec2 digital voice in the 70cm band) and a camera that can be remotely commanded to take and downlink JPEG images (see the instructions here). Thus, it offers very interesting possibilities.

Since I have some free time this weekend, I had planned on playing again with LilacSat-1 by using the Codec2 transponder. Wei Mingchuan BG2BHC persuaded me to try the camera as well, so I teamed up with Mike Rupprecht DK3WN to try the camera this morning. Mike would command the camera, since he has a fixed station with more power, and we would collaborate to receive the image. This is important because a single bit error or lost chunk in a JPEG file ruins the image from the point where it happens, and LilacSat-1 doesn’t have much protection against these problems. By joining the data received by multiple stations, the chances of receiving the complete image correctly are higher.

The pass we selected started at 07:53 UTC on 2018-09-01. I operated portable from the street using a handheld Arrow satellite yagi, a FUNcube Dongle Pro+ to receive, a Yaesu FT-2D to transmit through the FM/Codec2 transponder, and a laptop running gr-satellites. I used a WiMo diplexer between the FUNcube Dongle and the antenna to prevent desense from the 2m uplink.

To maximize our chances of receiving the images, I had programmed all SatNOGS stations in Europe to record the pass.

At the start of the pass I transmitted through the FM/Codec2 transponder to check that all my equipment was working and I could hear myself through the transponder. I called Mike just in case he had his station set up to use the transponder and could listen to me and reply. Mike didn’t come back, but suddenly I saw the satellite starting to transmit an image, as Mike had commanded it.

I was able to receive most of the image except for a brief fade in the middle. However, as I’ve already mentioned, even missing a small part of the image has catastrophic results.

I was confident that I could patch the missing data with whatever Mike or the SatNOGS stations had received, and I had only 3 minutes left until loss of signal, so after the image downlink had ended I used the Codec2 transponder to thank Mike and tell him that I judged this to be a success and that we could probably put together a complete image. For me the pass was essentially over.

Since passes are West to East, Mike in Germany still had a few minutes of the pass left, and I saw that he had commanded the downlink of another image. I tried to receive as much as possible, but lost the beginning of the image. Without the start of the image, you miss the JPEG header, so the image can’t be displayed.

After the pass I exchanged results with Mike. He had lost the header of the first image but was able to receive the beginning of the second image correctly. For him this had been a difficult pass due to low elevation and interference.

I also checked the SatNOGS stations. SV1IYO/A hadn’t received any trace of LilacSat-1’s signal, while uhf-satcom’s station had a weak signal with lots of fading. None of this seemed very useful, so I would have to piece together the images using only Mike’s recording and mine.

I should say a few words about combining LilacSat-1 images from different receivers to get a complete image, in case someone is interested in attempting this. You can read more details about the image protocol here. The JPEG images are sent in 64-byte chunks (except for the last chunk, which can be shorter). Each of these chunks is either received completely or not at all. Received chunks are stored in the JPEG file in their corresponding position. Missing chunks are filled in with zeros, so they are easy to detect.
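As a rough illustration of the chunk scheme just described, here is a minimal Python sketch that fills the zeroed chunks of one partial file with data from another. The function name `merge_two` is made up for this example, and it assumes that an all-zero chunk always means "missing", which is very likely but not guaranteed for real image data. It is not the code from my notebook.

```python
CHUNK_SIZE = 64  # chunk length given in the text


def merge_two(primary: bytes, secondary: bytes) -> tuple[bytes, list[int]]:
    """Fill missing (all-zero) chunks of `primary` with chunks from `secondary`.

    Returns the merged file and the indices of chunks missing in both inputs.
    Assumption: a chunk consisting only of zeros was never received.
    """
    total = max(len(primary), len(secondary))
    merged = bytearray(total)  # starts out zero-filled, like a fully missing file
    still_missing = []
    for index, offset in enumerate(range(0, total, CHUNK_SIZE)):
        for source in (primary, secondary):
            chunk = source[offset:offset + CHUNK_SIZE]
            if any(chunk):  # at least one non-zero byte: the chunk was received
                merged[offset:offset + len(chunk)] = chunk
                break
        else:
            # Neither source has this chunk
            still_missing.append(index)
    return bytes(merged), still_missing
```

The list of chunk indices that are still missing gives a quick idea of whether a third source is worth hunting for. Note that this prefers the first file whenever both have a chunk, so it does nothing about corrupted chunks.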

There are a couple of twists to this scheme. First, if a chunk is received, there is no guarantee that it has no bit errors. Usually most chunks are correct, but some of them might be corrupted. Second, since there is no protection against bit errors, sometimes the offset field of an image packet is corrupted. This usually causes the chunk to be written at a ridiculously large offset, making the JPEG file much larger. This is easy to correct by trimming the file to its correct size.
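One possible way to automate the trimming is sketched below in Python. It is only a heuristic: it assumes that a stray chunk shows up as some data sitting after a very long run of zeroed chunks, and it cuts the file at the start of that run. The function name `trim_stray_tail` and the threshold `max_gap_chunks` are made up for this example. The threshold is arbitrary and needs tuning, since a long fade in the middle of a genuine image would look the same.

```python
CHUNK_SIZE = 64  # chunk length given in the text


def trim_stray_tail(data: bytes, max_gap_chunks: int = 200) -> bytes:
    """Cut the file where a long run of zeroed chunks is followed by data.

    Assumption: a gap of `max_gap_chunks` or more missing chunks followed by
    data means the later data was written at a corrupted offset.
    """
    run_start = None  # index of the first chunk of the current zero run
    for index, offset in enumerate(range(0, len(data), CHUNK_SIZE)):
        chunk = data[offset:offset + CHUNK_SIZE]
        if any(chunk):
            # Data found: check how long the zero run before it was
            if run_start is not None and index - run_start >= max_gap_chunks:
                return data[:run_start * CHUNK_SIZE]
            run_start = None
        elif run_start is None:
            run_start = index
    return data  # no suspicious gap found
```

If the true size of the image is known by other means, simply slicing the file to that size is safer than this guess.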

The lack of protection against bit errors arises because the CSP packets from LilacSat-1 use a non-standard CRC, so it can’t be checked in gr-satellites. The CRC is checked in the Harbin server to prevent incorrect telemetry from entering the database, but this is quite inconvenient for receiving images locally, since it prevents a good automated way of merging partial images from different sources. A majority voting method can still be used to spot corrupted chunks, but this is only useful if we are merging from more than two sources.
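To make the majority voting idea concrete, here is a minimal Python sketch. It is not what I actually ran, and the function name `vote_chunks` is made up for this example. For each chunk position it takes the most common copy among the sources that received it, and flags the chunk as doubtful when nobody received it or when no copy has a strict majority.

```python
from collections import Counter

CHUNK_SIZE = 64  # chunk length given in the text


def vote_chunks(sources: list[bytes]) -> tuple[bytes, list[int]]:
    """Merge partial image files by majority vote on each chunk.

    Returns the merged file and the indices of doubtful chunks (missing
    everywhere, or received copies disagree without a strict majority).
    Assumption: an all-zero chunk was never received and does not vote.
    """
    total = max(len(s) for s in sources)
    merged = bytearray(total)  # zero-filled, so unreceived chunks stay zero
    doubtful = []
    for index, offset in enumerate(range(0, total, CHUNK_SIZE)):
        copies = [s[offset:offset + CHUNK_SIZE] for s in sources]
        received = [c for c in copies if any(c)]
        if not received:
            doubtful.append(index)
            continue
        winner, votes = Counter(received).most_common(1)[0]
        if len(set(received)) > 1 and 2 * votes <= len(received):
            # Copies disagree and none has more than half of the votes
            doubtful.append(index)
        merged[offset:offset + len(winner)] = winner
    return bytes(merged), doubtful
```

With only two sources, any disagreement ends up in the doubtful list, which is exactly the case where chunks have to be selected by hand. A chunk received by a single source is accepted as is, since there is nothing to compare it against.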

An extra remark is that it usually helps to run the same recording a couple of times through the decoder, perhaps using slightly different frequency offsets. The set of chunks obtained in each run can be different, and this may help you complete an image if you have only a few missing chunks.

After some work extracting the data from Mike’s recording and mine and selecting by hand some corrupted chunks, I have been able to recover both JPEG images completely (except for a very small piece at the end of the second image).

The data extracted from the recordings has been combined in this Jupyter notebook. I won’t comment much on it, since this is definitely not the way things should be done, so I’m just linking it here for the sake of completeness. As you can see, I find it useful to check which chunks of the image are missing to judge whether my recovery has any chance of succeeding, but this procedure is quite tedious.

Regarding the Codec2 transponder, I found it very easy to get in with just 5W, and the quality of the audio is more or less okay. One thing that I’ve noted is that it takes a few hundred milliseconds to activate the transponder, so if one is not careful, the beginning of the transmission is cut off. One should press the PTT and perhaps wait a second before speaking.

The Codec2 audio obtained from my recording can be played back below. The most interesting part starts at 01:45. Most of the garble you hear is produced during the image downlink. When LilacSat-1 is transmitting a telemetry packet or an image, Codec2 frames are also sent even if no one is using the transponder, and these spurious frames are not squelched.

The raw Codec2 data can be found here. This can be played back using

<lilacsat1.c2 c2dec 1300 - - | play -t raw -r 8000 -e signed-integer -b 16 -c 1 -

or converted to WAV by doing

<lilacsat1.c2 c2dec 1300 - - | sox -t raw -r 8000 -e signed-integer -b 16 -c 1 - -t wav lilacsat1_audio.wav

This needs c2dec, from the codec2 library.

One interesting thing about the Codec2 transponder that I think is not well known is that the transponder is completely independent from the image downlink. Each of them uses a different virtual channel and has its own allocated bandwidth (1300bps for the Codec2 channel and 3400bps for the image and telemetry channel; see here for more details). Thus, using the Codec2 transponder while an image is sent doesn’t disturb the image downlink at all. The image will take exactly the same time to download.

With this in mind, I find it very interesting to collaborate between several stations to take and receive images, using the Codec2 transponder to coordinate the sending of commands and discuss the reception while the pictures are being downlinked. This could be a more enjoyable activity than the usual fast QSOs of an FM satellite, but LilacSat-1 has never gained much popularity. I think we should promote this satellite and mode of operation and help people with the technical difficulties they may find in setting up their station (especially with the software).

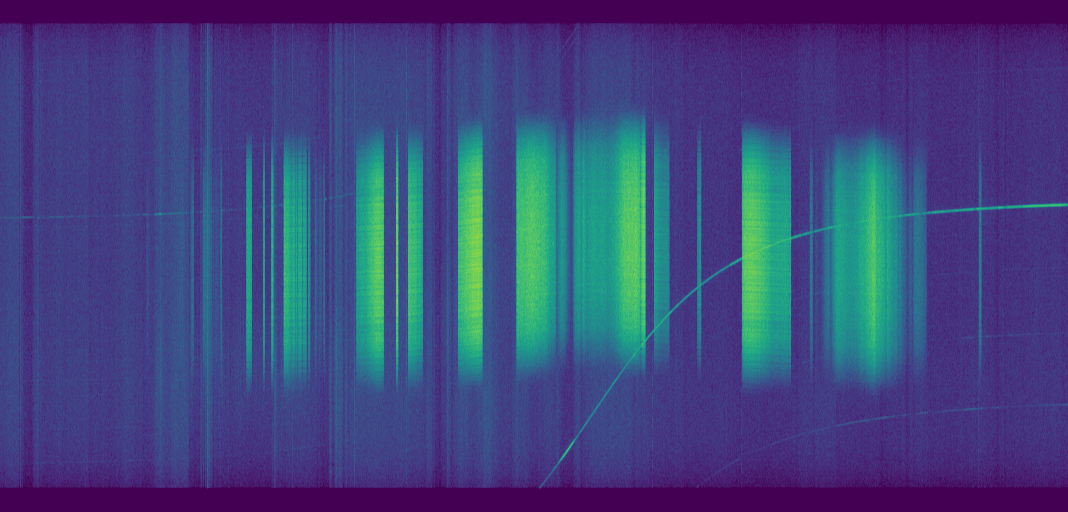

My recording of LilacSat-1 can be downloaded here (52MB). It is in WAV format and it can be decoded directly with gr-satellites. The waterfall for this recording is shown below. Note the fading during the image transfers. An interesting detail is that it is possible to distinguish the Codec2 downlink from the image downlink just by looking at the spectrum. When only the Codec2 downlink is running, KISS idle c0 bytes are transmitted in the telemetry and image channel. Since no scrambler is used (only convolutional encoding), this creates some subtle spectral patterns. In contrast, the spectrum of the image downlink is more homogeneous.