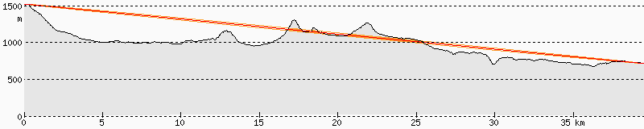

On July 13, the Vega-C maiden flight delivered the LARES-2 passive laser reflector satellite and the following six cubesats to a 5900 km MEO orbit: AstroBio Cubesat, Greencube, ALPHA, Trisat-R, MTCube-2, and CELESTA. This is the first time that cubesats have been put in a MEO orbit (see slide 8 in this presentation). The six cubesats are very similar to those launched in LEO orbits, and use the 435 MHz amateur satellite band for their telemetry downlink (although ALPHA and Trisat-R have been declined IARU coordination, since IARU considers that these missions do not meet the definition of the amateur satellite service).

Communications from this MEO orbit are challenging for small satellites because, compared to a 500 km LEO orbit, the slant range is about 10 times larger at the closest point of the orbit and 4 times larger near the horizon, giving path losses that are roughly 20 dB and 12 dB higher, respectively, than in LEO.
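These slant range ratios can be checked with a short calculation. The following is a minimal sketch, assuming a spherical Earth of radius 6371 km, circular orbits, and free-space propagation only; the function names are hypothetical and chosen just for illustration.

```python
import math

R_EARTH_KM = 6371.0  # assumption: mean Earth radius, spherical Earth


def slant_range_km(altitude_km, elevation_deg):
    """Ground-station-to-satellite distance for a circular orbit.

    Follows from the law of cosines applied to the triangle formed by
    the Earth's centre, the station and the satellite.
    """
    el = math.radians(elevation_deg)
    r = R_EARTH_KM + altitude_km
    return (math.sqrt(r**2 - (R_EARTH_KM * math.cos(el))**2)
            - R_EARTH_KM * math.sin(el))


def extra_path_loss_db(alt_a_km, alt_b_km, elevation_deg):
    """Free-space path loss of orbit A relative to orbit B, in dB.

    At the same frequency, free-space loss scales with distance squared,
    so the difference is 20*log10 of the slant range ratio.
    """
    ratio = (slant_range_km(alt_a_km, elevation_deg)
             / slant_range_km(alt_b_km, elevation_deg))
    return 20 * math.log10(ratio)


if __name__ == "__main__":
    for elevation in (90, 0):  # overhead pass and horizon
        meo = slant_range_km(5900, elevation)
        leo = slant_range_km(500, elevation)
        extra = extra_path_loss_db(5900, 500, elevation)
        print(f"elevation {elevation:2d} deg: MEO {meo:7.0f} km, "
              f"LEO {leo:6.0f} km, ratio {meo / leo:4.1f}, "
              f"extra loss {extra:4.1f} dB")
```

Under these assumptions the ratio comes out at about 12 for an overhead pass and about 4 at the horizon, which corresponds to approximately 21 dB and 12 dB of additional path loss.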

I wanted to try to observe these satellites with my small station: a 7-element UHF yagi from Arrow Antennas in a noisy urban location. The nice thing about this MEO orbit is that the passes last some 50 minutes, instead of the 10 to 12 minutes of a LEO pass. This means that I could set the antenna on a tripod and move it only infrequently.

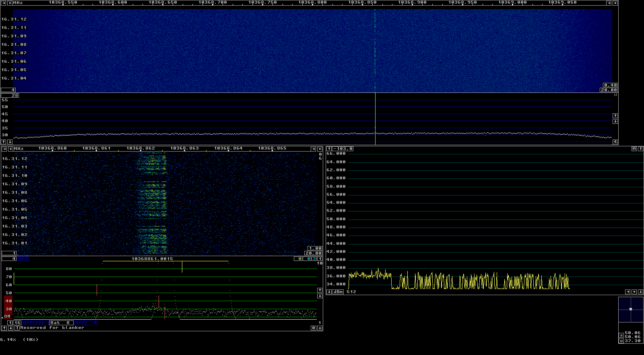

As part of the observation, I wanted to perform an absolute power calibration of my SDR (a USRP B205mini) in order to be able to measure the noise power at my location and also the power of the satellite signals, if I was able to detect them.